3D Cartesian Triangulation¶

Overview¶

Triangulation happens in two steps:

First a linear system is solved for initial guess. This linear system doesnt take into account any noise. Hence its the initial guess.

Using initial guess, a non linear system is optimized to give final estimate which can incorporate noise.

Assumption¶

All the poses that the feature is seen from to be of known quantity. This means we assume that all the clones where the feature was seen from, have known poses (these are not known exactly but estimated using the EKF).

Extrinsics, intrinsics and offsets are assumed to be known and exact.

Input¶

Givens:

u,v of the feature in

Pose and hence camera pose (assuming extrinsics and intrinsics are known and given) in times

Output¶

of feature in a known frame . is an arbitrary pick from

Step 1: Linear system¶

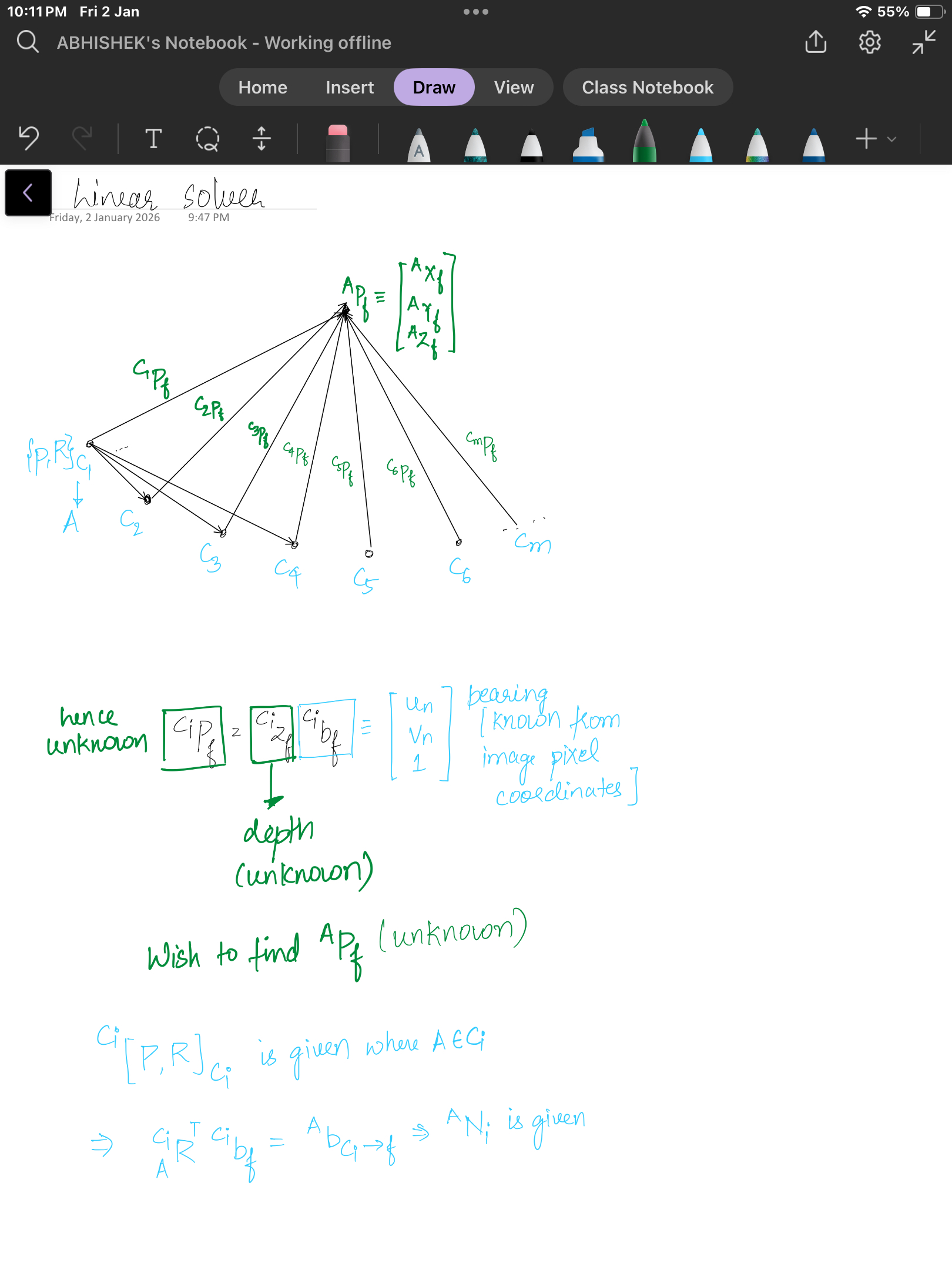

We wish to create a solvable linear system that can give us an initial guess for the 3D cartesian position of our feature. This feature will be triangulated in some anchor camera frame which we can arbitrary pick. If the feature , is observed by pose , given the anchor pose , we can have the following transformation from any camera pose :

In the absents of noise, the measurement in the current frame is the bearing and its depth . Thus we have the following mapping to a feature seen from the current frame:

We note that and represent the undistorted normalized image coordinates. This bearing can be warped into the the anchor frame by substituting into the above equation:

To remove the need to estimate the extra degree of freedom of depth , we define the following vectors which are orthoganal to the bearing :

All three rows are perpendicular to the vector and thus . We can then multiple the transform equation/constraint to form two equation which only relates to the unknown 3 d.o.f :

By stacking all the measurements, we can have:

Since each pixel measurement provides two constraints, as long as , we will have enough constraints to triangulate the feature. In practice, the more views of the feature the better the triangulation and thus normally want to have a feature seen from at least five views.

We could select two rows of the each , to reduce the number of rows, but by having a square system we can perform the following “trick”.

This is a system which can be quickly solved for as compared to the original or system. Additional checks are done to see that the triangulated feature is “valid” and in front of the camera and not too far away. The condition number of the above linear system and reject systems that are “sensitive” to errors and have a large value.

bool FeatureInitializer::single_triangulation(std::shared_ptr<Feature> feat,

std::unordered_map<size_t, std::unordered_map<double, ClonePose>> &clonesCAM) {

// Total number of measurements

// Also set the first measurement to be the anchor frame

int total_meas = 0; // Total measurements for all cameras (stereo for that matter track same feature in bothg cameras)

size_t anchor_most_meas = 0; // Use the camera with most number of measurements for this feature

size_t most_meas = 0; // Number of measurements in the camera with most measurements

for (auto const &pair : feat->timestamps) { // feat->timestamps is a unordered_map with cam_id: timestamps items

total_meas += (int)pair.second.size();

if (pair.second.size() > most_meas) {

anchor_most_meas = pair.first;

most_meas = pair.second.size();

}

}

feat->anchor_cam_id = anchor_most_meas; // Use anchor cam id the id for camera with most measurements

feat->anchor_clone_timestamp = feat->timestamps.at(feat->anchor_cam_id).back(); // Use the oldest timestamp for the camera with most measurements

// Our linear system matrices

Eigen::Matrix3d A = Eigen::Matrix3d::Zero();

Eigen::Vector3d b = Eigen::Vector3d::Zero();

// Get the position of the anchor pose

ClonePose anchorclone = clonesCAM.at(feat->anchor_cam_id).at(feat->anchor_clone_timestamp);

const Eigen::Matrix<double, 3, 3> &R_GtoA = anchorclone.Rot();

const Eigen::Matrix<double, 3, 1> &p_AinG = anchorclone.pos();

// Loop through each camera for this feature

for (auto const &pair : feat->timestamps) {

// Add CAM_I features

for (size_t m = 0; m < feat->timestamps.at(pair.first).size(); m++) {

// Get the position of this clone in the global

const Eigen::Matrix<double, 3, 3> &R_GtoCi = clonesCAM.at(pair.first).at(feat->timestamps.at(pair.first).at(m)).Rot();

const Eigen::Matrix<double, 3, 1> &p_CiinG = clonesCAM.at(pair.first).at(feat->timestamps.at(pair.first).at(m)).pos();

// Convert current position relative to anchor

Eigen::Matrix<double, 3, 3> R_AtoCi;

R_AtoCi.noalias() = R_GtoCi * R_GtoA.transpose();

Eigen::Matrix<double, 3, 1> p_CiinA;

p_CiinA.noalias() = R_GtoA * (p_CiinG - p_AinG);

// Get the UV coordinate normal

Eigen::Matrix<double, 3, 1> b_i;

b_i << feat->uvs_norm.at(pair.first).at(m)(0), feat->uvs_norm.at(pair.first).at(m)(1), 1;

b_i = R_AtoCi.transpose() * b_i;

b_i = b_i / b_i.norm();

Eigen::Matrix3d Bperp = skew_x(b_i);

// Append to our linear system

Eigen::Matrix3d Ai = Bperp.transpose() * Bperp;

A += Ai;

b += Ai * p_CiinA;

}

}

// Solve the linear system

Eigen::MatrixXd p_f = A.colPivHouseholderQr().solve(b);

// Check A and p_f

Eigen::JacobiSVD<Eigen::Matrix3d> svd(A);

Eigen::MatrixXd singularValues;

singularValues.resize(svd.singularValues().rows(), 1);

singularValues = svd.singularValues();

double condA = singularValues(0, 0) / singularValues(singularValues.rows() - 1, 0);

// std::stringstream ss;

// ss << feat->featid << " - cond " << std::abs(condA) << " - z " << p_f(2, 0) << std::endl;

// PRINT_DEBUG(ss.str().c_str());

// If we have a bad condition number, or it is too close

// Then set the flag for bad (i.e. set z-axis to nan)

if (std::abs(condA) > _options.max_cond_number || p_f(2, 0) < _options.min_dist || p_f(2, 0) > _options.max_dist ||

std::isnan(p_f.norm())) {

return false;

}

// Store it in our feature object

feat->p_FinA = p_f;

feat->p_FinG = R_GtoA.transpose() * feat->p_FinA + p_AinG;

return true;

}LM method¶

1. The problem LM solves¶

We want to solve a nonlinear least squares problem:

where:

are parameters

→ in the below code:is the residual of measurement

→ reprojection error in normalized image coordinates

Stacking all residuals:

Then:

2. Linearization (core idea)¶

Because is nonlinear, we linearize it around the current estimate :

where:

3. Gauss–Newton method (baseline)¶

Insert the linearization into the cost:

Take derivative w.r.t. and set to zero:

This gives the Gauss–Newton step:

Problems with Gauss–Newton¶

may be ill-conditioned or singular

Large steps can increase cost

Very sensitive when far from minimum

This is why LM exists.

4. Levenberg–Marquardt idea¶

LM interpolates between:

| Method | Behavior |

|---|---|

| Gauss–Newton | Fast near solution |

| Gradient descent | Stable far from solution |

Key idea: damp the normal equations.

5. LM normal equations (math)¶

Classic LM modifies GN as:

where:

is the damping parameter

Behavior¶

Small → Gauss–Newton

Large → Gradient descent

6. Why damping works (intuition)¶

When is large:

So:

Which is gradient descent with step size .

7. LM step acceptance logic¶

Given:

current cost

trial step

Compute:

If cost decreases¶

Accept step

Decrease → more Gauss–Newton

If cost increases¶

Reject step

Increase → more gradient descent

Step 2: 3D Inverse Non-linear Optimization¶

After we get the triangulated feature 3D position, a nonlinear least-squares will be performed to refine this estimate. In order to achieve good numerical stability, we use the inverse depth representation for point feature which helps with convergence. We find that in most cases this problem converges within 2-3 iterations in indoor environments. The feature transformation can be written as:

We define , , and to get the following measurement equation: ( are knowns)

The feature measurement seen from the camera frame can be reformulated as:

Therefore, we can have the least-squares formulated and Jacobians:

z is the uv from the measurements from image tracking, is known formula and are being initialized with the initial guess and we optimize for them.

The least-squares problem can be solved with Gaussian-Newton or Levenberg-Marquart algorithm..

Understanding the Baseline Ratio Check (Detailed Explanation)¶

This section of the code implements a parallax / observability check to determine whether a triangulated feature has a reliable depth estimate. The core idea is to ensure that the camera motion provides enough sideways (perpendicular) baseline relative to the feature viewing direction.

1) What “baseline” means for triangulation¶

Triangulation quality depends on parallax, i.e., how much the camera moves perpendicular to the ray pointing to the feature.

Sideways motion → strong parallax → well-constrained depth

Motion along the ray → weak parallax → ill-conditioned depth

So we want a measure of:

2) Orthonormal basis aligned with the feature ray (QR decomposition)¶

Let the feature position in the anchor frame be:

The code performs a QR decomposition on this 3×1 vector to construct an orthonormal basis :

(unit vector along the feature ray)

span the plane orthogonal to the ray

This orthogonal plane is the tangent plane to the viewing direction.

We extract:

which forms an orthonormal basis for the perpendicular subspace.

3) Camera displacement relative to the anchor¶

For each camera clone i, compute:

This is the position of camera i relative to the anchor, expressed in the anchor frame.

4) Perpendicular baseline computation¶

Project the camera displacement onto the perpendicular plane:

The norm of this vector is:

This equals the magnitude of the component of camera motion perpendicular to the feature ray.

Interpretation:

→ camera moved along the ray → no parallax

large b_i → strong sideways motion → good triangulation geometry

The code keeps the maximum:

This captures the best available parallax among all observations.

5) Baseline ratio definition¶

Let:

(approximate range to the feature)

b_max = maximum perpendicular baseline

The baseline ratio is:

This ratio is approximately the inverse of the triangulation angle:

For small angles θ:

Thus:

small d / b → large parallax → good geometry

large d / b → tiny parallax → poor geometry

6) Why perpendicular baseline is used (not total baseline)¶

Total displacement ‖t_i‖ is misleading:

Large forward motion gives large ‖t_i‖

But perpendicular component may be near zero → depth still unobservable

Projecting onto the orthogonal plane explicitly removes motion along the ray direction.

7) Why QR instead of direct projection¶

Perpendicular motion can also be computed as:

where

The QR-based approach is numerically stable and avoids manually constructing orthogonal vectors.

8) Rejection logic in the code¶

A feature is rejected if:

Its depth is outside [min_dist, max_dist]

The baseline ratio exceeds max_baseline

The result is NaN

The baseline ratio condition enforces a minimum parallax requirement:

implemented as:

9) Geometric meaning (one sentence)¶

The baseline ratio test ensures that the camera has moved far enough sideways relative to the feature direction to make its depth estimate geometrically observable and numerically stable.

bool FeatureInitializer::single_gaussnewton(std::shared_ptr<Feature> feat,

std::unordered_map<size_t, std::unordered_map<double, ClonePose>> &clonesCAM) {

// Get into inverse depth

double rho = 1 / feat->p_FinA(2);

double alpha = feat->p_FinA(0) / feat->p_FinA(2);

double beta = feat->p_FinA(1) / feat->p_FinA(2);

// Optimization parameters

double lam = _options.init_lamda;

double eps = 10000;

int runs = 0;

// Variables used in the optimization

bool recompute = true;

Eigen::Matrix<double, 3, 3> Hess = Eigen::Matrix<double, 3, 3>::Zero();

Eigen::Matrix<double, 3, 1> grad = Eigen::Matrix<double, 3, 1>::Zero();

// Cost at the last iteration

double cost_old = compute_error(clonesCAM, feat, alpha, beta, rho);

// Get the position of the anchor pose

const Eigen::Matrix<double, 3, 3> &R_GtoA = clonesCAM.at(feat->anchor_cam_id).at(feat->anchor_clone_timestamp).Rot();

const Eigen::Matrix<double, 3, 1> &p_AinG = clonesCAM.at(feat->anchor_cam_id).at(feat->anchor_clone_timestamp).pos();

// Loop till we have either

// 1. Reached our max iteration count

// 2. System is unstable

// 3. System has converged

while (runs < _options.max_runs && lam < _options.max_lamda && eps > _options.min_dx) {

// Triggers a recomputation of jacobians/information/gradients

if (recompute) {

Hess.setZero();

grad.setZero();

double err = 0;

// Loop through each camera for this feature

for (auto const &pair : feat->timestamps) {

// Add CAM_I features

for (size_t m = 0; m < feat->timestamps.at(pair.first).size(); m++) {

//=====================================================================================

//=====================================================================================

// Get the position of this clone in the global

const Eigen::Matrix<double, 3, 3> &R_GtoCi = clonesCAM.at(pair.first).at(feat->timestamps[pair.first].at(m)).Rot();

const Eigen::Matrix<double, 3, 1> &p_CiinG = clonesCAM.at(pair.first).at(feat->timestamps[pair.first].at(m)).pos();

// Convert current position relative to anchor

Eigen::Matrix<double, 3, 3> R_AtoCi;

R_AtoCi.noalias() = R_GtoCi * R_GtoA.transpose();

Eigen::Matrix<double, 3, 1> p_CiinA;

p_CiinA.noalias() = R_GtoA * (p_CiinG - p_AinG);

Eigen::Matrix<double, 3, 1> p_AinCi;

p_AinCi.noalias() = -R_AtoCi * p_CiinA;

//=====================================================================================

//=====================================================================================

// Middle variables of the system

double hi1 = R_AtoCi(0, 0) * alpha + R_AtoCi(0, 1) * beta + R_AtoCi(0, 2) + rho * p_AinCi(0, 0);

double hi2 = R_AtoCi(1, 0) * alpha + R_AtoCi(1, 1) * beta + R_AtoCi(1, 2) + rho * p_AinCi(1, 0);

double hi3 = R_AtoCi(2, 0) * alpha + R_AtoCi(2, 1) * beta + R_AtoCi(2, 2) + rho * p_AinCi(2, 0);

// Calculate jacobian

double d_z1_d_alpha = (R_AtoCi(0, 0) * hi3 - hi1 * R_AtoCi(2, 0)) / (pow(hi3, 2));

double d_z1_d_beta = (R_AtoCi(0, 1) * hi3 - hi1 * R_AtoCi(2, 1)) / (pow(hi3, 2));

double d_z1_d_rho = (p_AinCi(0, 0) * hi3 - hi1 * p_AinCi(2, 0)) / (pow(hi3, 2));

double d_z2_d_alpha = (R_AtoCi(1, 0) * hi3 - hi2 * R_AtoCi(2, 0)) / (pow(hi3, 2));

double d_z2_d_beta = (R_AtoCi(1, 1) * hi3 - hi2 * R_AtoCi(2, 1)) / (pow(hi3, 2));

double d_z2_d_rho = (p_AinCi(1, 0) * hi3 - hi2 * p_AinCi(2, 0)) / (pow(hi3, 2));

Eigen::Matrix<double, 2, 3> H;

H << d_z1_d_alpha, d_z1_d_beta, d_z1_d_rho, d_z2_d_alpha, d_z2_d_beta, d_z2_d_rho;

// Calculate residual

Eigen::Matrix<float, 2, 1> z;

z << hi1 / hi3, hi2 / hi3;

Eigen::Matrix<float, 2, 1> res = feat->uvs_norm.at(pair.first).at(m) - z;

//=====================================================================================

//=====================================================================================

// Append to our summation variables

err += std::pow(res.norm(), 2);

grad.noalias() += H.transpose() * res.cast<double>(); // // Jᵀr

Hess.noalias() += H.transpose() * H; // JᵀJ

}

}

}

// Solve Levenberg iteration

Eigen::Matrix<double, 3, 3> Hess_l = Hess;

for (size_t r = 0; r < (size_t)Hess.rows(); r++) {

Hess_l(r, r) *= (1.0 + lam);

}

Eigen::Matrix<double, 3, 1> dx = Hess_l.colPivHouseholderQr().solve(grad);

// Eigen::Matrix<double,3,1> dx = (Hess+lam*Eigen::MatrixXd::Identity(Hess.rows(), Hess.rows())).colPivHouseholderQr().solve(grad);

// Check if error has gone down

double cost = compute_error(clonesCAM, feat, alpha + dx(0, 0), beta + dx(1, 0), rho + dx(2, 0));

// Debug print

// std::stringstream ss;

// ss << "run = " << runs << " | cost = " << dx.norm() << " | lamda = " << lam << " | depth = " << 1/rho << endl;

// PRINT_DEBUG(ss.str().c_str());

// Check if converged

if (cost <= cost_old && (cost_old - cost) / cost_old < _options.min_dcost) {

alpha += dx(0, 0);

beta += dx(1, 0);

rho += dx(2, 0);

eps = 0;

break;

}

// If cost is lowered, accept step

// Else inflate lambda (try to make more stable)

if (cost <= cost_old) {

recompute = true;

cost_old = cost;

alpha += dx(0, 0);

beta += dx(1, 0);

rho += dx(2, 0);

runs++;

lam = lam / _options.lam_mult;

eps = dx.norm();

} else {

recompute = false;

lam = lam * _options.lam_mult;

continue;

}

}

// Revert to standard, and set to all

feat->p_FinA(0) = alpha / rho;

feat->p_FinA(1) = beta / rho;

feat->p_FinA(2) = 1 / rho;

// Get tangent plane to x_hat

Eigen::HouseholderQR<Eigen::MatrixXd> qr(feat->p_FinA);

Eigen::MatrixXd Q = qr.householderQ();

// Max baseline we have between poses

double base_line_max = 0.0;

// Check maximum baseline

// Loop through each camera for this feature

for (auto const &pair : feat->timestamps) {

// Loop through the other clones to see what the max baseline is

for (size_t m = 0; m < feat->timestamps.at(pair.first).size(); m++) {

// Get the position of this clone in the global

const Eigen::Matrix<double, 3, 1> &p_CiinG = clonesCAM.at(pair.first).at(feat->timestamps.at(pair.first).at(m)).pos();

// Convert current position relative to anchor

Eigen::Matrix<double, 3, 1> p_CiinA = R_GtoA * (p_CiinG - p_AinG);

// Dot product camera pose and nullspace

double base_line = ((Q.block(0, 1, 3, 2)).transpose() * p_CiinA).norm();

if (base_line > base_line_max)

base_line_max = base_line;

}

}

// std::stringstream ss;

// ss << feat->featid << " - max base " << (feat->p_FinA.norm() / base_line_max) << " - z " << feat->p_FinA(2) << std::endl;

// PRINT_DEBUG(ss.str().c_str());

// Check if this feature is bad or not

// 1. If the feature is too close

// 2. If the feature is invalid

// 3. If the baseline ratio is large

if (feat->p_FinA(2) < _options.min_dist || feat->p_FinA(2) > _options.max_dist ||

(feat->p_FinA.norm() / base_line_max) > _options.max_baseline || std::isnan(feat->p_FinA.norm())) {

return false;

}

// Finally get position in global frame

feat->p_FinG = R_GtoA.transpose() * feat->p_FinA + p_AinG;

return true;

}

// Computes ||z-h(...)||^2

double FeatureInitializer::compute_error(std::unordered_map<size_t, std::unordered_map<double, ClonePose>> &clonesCAM,

std::shared_ptr<Feature> feat, double alpha, double beta, double rho) {

// Total error

double err = 0;

// Get the position of the anchor pose

const Eigen::Matrix<double, 3, 3> &R_GtoA = clonesCAM.at(feat->anchor_cam_id).at(feat->anchor_clone_timestamp).Rot();

const Eigen::Matrix<double, 3, 1> &p_AinG = clonesCAM.at(feat->anchor_cam_id).at(feat->anchor_clone_timestamp).pos();

// Loop through each camera for this feature

for (auto const &pair : feat->timestamps) {

// Add CAM_I features

for (size_t m = 0; m < feat->timestamps.at(pair.first).size(); m++) {

//=====================================================================================

//=====================================================================================

// Get the position of this clone in the global

const Eigen::Matrix<double, 3, 3> &R_GtoCi = clonesCAM.at(pair.first).at(feat->timestamps.at(pair.first).at(m)).Rot();

const Eigen::Matrix<double, 3, 1> &p_CiinG = clonesCAM.at(pair.first).at(feat->timestamps.at(pair.first).at(m)).pos();

// Convert current position relative to anchor

Eigen::Matrix<double, 3, 3> R_AtoCi;

R_AtoCi.noalias() = R_GtoCi * R_GtoA.transpose();

Eigen::Matrix<double, 3, 1> p_CiinA;

p_CiinA.noalias() = R_GtoA * (p_CiinG - p_AinG);

Eigen::Matrix<double, 3, 1> p_AinCi;

p_AinCi.noalias() = -R_AtoCi * p_CiinA;

//=====================================================================================

//=====================================================================================

// Middle variables of the system

double hi1 = R_AtoCi(0, 0) * alpha + R_AtoCi(0, 1) * beta + R_AtoCi(0, 2) + rho * p_AinCi(0, 0);

double hi2 = R_AtoCi(1, 0) * alpha + R_AtoCi(1, 1) * beta + R_AtoCi(1, 2) + rho * p_AinCi(1, 0);

double hi3 = R_AtoCi(2, 0) * alpha + R_AtoCi(2, 1) * beta + R_AtoCi(2, 2) + rho * p_AinCi(2, 0);

// Calculate residual

Eigen::Matrix<float, 2, 1> z;

z << hi1 / hi3, hi2 / hi3;

Eigen::Matrix<float, 2, 1> res = feat->uvs_norm.at(pair.first).at(m) - z;

// Append to our summation variables

err += pow(res.norm(), 2);

}

}

return err;

}